Robots are getting better at understanding the world around them. They can recognize faces, navigate complex environments, and even carry on conversations. But one capability remains extremely difficult for machines: empathy. Humans naturally read emotional cues in tone, facial expressions, word choice, and context. For artificial systems, this ability has to be learned.

Over the past decade, researchers in fields like machine learning, robotics, and computational linguistics have started exploring an unexpected source of emotional training data: social media. Platforms where millions of people openly share their thoughts, frustrations, celebrations, and fears provide a massive archive of real human emotion. When used carefully, these datasets can help train social robots to recognize emotional signals and respond in ways that feel more human.

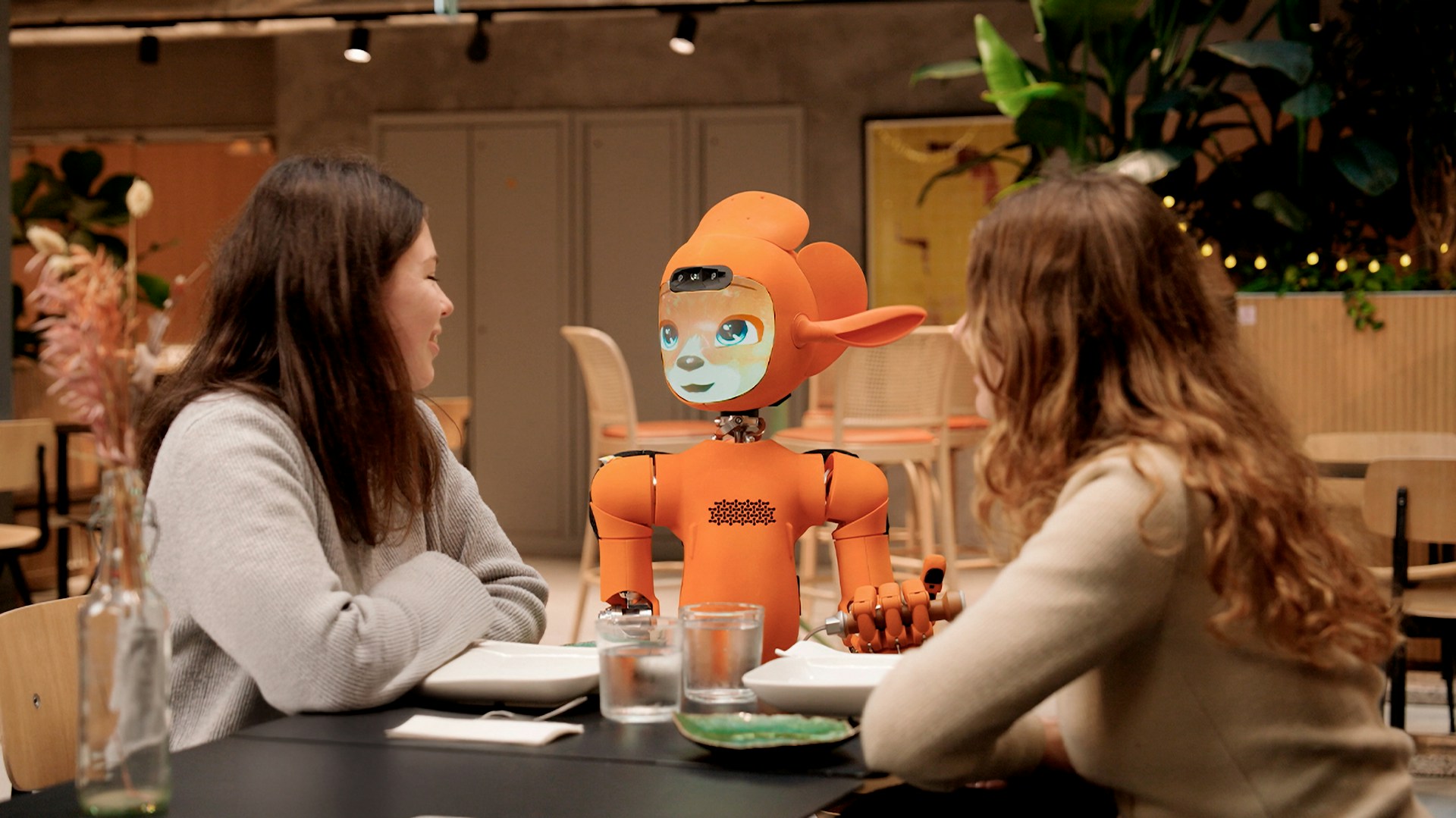

The idea is simple in principle but complex in practice. If robots are expected to assist people in homes, hospitals, classrooms, or customer service environments, they must learn how humans actually communicate — not just in formal speech, but in everyday language full of slang, humor, irony, and emotional nuance.

The Rise of Social Robots

The concept of a robot capable of social interaction has been explored for decades, but only recently have the necessary technologies matured. Advances in natural language processing (NLP), deep learning, and computer vision have made it possible to design machines that can read facial expressions, process speech, and maintain conversational context.

Several well-known platforms illustrate how quickly this field is evolving. The humanoid robot Pepper, created by SoftBank Robotics, was designed specifically to recognize human emotions and interact with customers in retail and hospitality environments. Another example is PARO, a therapeutic robotic seal widely used in elder care facilities. Studies have shown that interacting with PARO can reduce stress and improve emotional well-being among patients with dementia.

In educational settings, robots like NAO and Moxie are used to help children develop social skills, especially in therapy for autism spectrum disorders. These robots rely heavily on their ability to interpret emotional signals and respond appropriately.

But teaching a machine to understand emotions is not the same as teaching it to recognize objects. Emotions are ambiguous, culturally dependent, and highly contextual. This is where social media data becomes incredibly valuable.

Social Media as a Massive Emotional Dataset

Social media platforms collectively represent one of the largest datasets of human communication ever created. Every day, billions of users share messages, comments, reactions, videos, and images that capture their emotional states.

Unlike controlled research datasets, these interactions are spontaneous and authentic. People argue, joke, complain, celebrate, and support each other in real time. For researchers trying to model human emotion computationally, this kind of data is invaluable.

Consider X (formerly Twitter), where users post hundreds of millions of short messages daily. These messages often contain clear emotional signals: frustration about a delayed flight, excitement about a sports victory, grief after a public tragedy. Similarly, communities on Reddit host deeply personal conversations where users discuss mental health, relationships, career struggles, or major life events.

The diversity of these interactions provides training data for algorithms that must interpret emotional meaning in text.

A researcher studying empathy in AI once summarized the challenge succinctly:

“If robots are going to interact with humans naturally, they must learn from the same messy, emotional communication humans use with each other.”

Social media provides exactly that.

Teaching Machines to Recognize Emotion

Training empathetic robots requires multiple layers of artificial intelligence working together. At the core are models designed to interpret language and emotional signals.

One foundational technique is sentiment analysis, which classifies text according to emotional tone. Early sentiment analysis systems could only distinguish between positive and negative sentiment. Today’s models can identify far more nuanced emotions.
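To make the limits of the early positive/negative approach concrete, here is a minimal sketch of a lexicon-based classifier. The word lists and the `classify_sentiment` function are hypothetical illustrations, not taken from any particular system; real models learn such associations from labeled data.

```python
import re

# Hypothetical toy lexicons, chosen only for illustration.
POSITIVE = {"great", "love", "happy", "excited", "wonderful"}
NEGATIVE = {"delayed", "angry", "sad", "terrible", "hate"}


def classify_sentiment(text: str) -> str:
    """Count positive and negative words and return the dominant polarity."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(classify_sentiment("My flight is delayed again, terrible service"))
print(classify_sentiment("That's just great"))
```

The first call returns "negative". The second returns "positive" even when the speaker is being sarcastic, which is the kind of error that pushes researchers toward context-aware models and finer-grained emotion labels.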

A major milestone in this area was the GoEmotions dataset, developed by researchers at Google Research. The dataset contains roughly 58,000 Reddit comments labeled with 27 distinct emotion categories, including admiration, curiosity, disappointment, grief, and amusement, plus a neutral label. This type of annotated data allows machine learning models to detect subtle emotional signals that go far beyond simple positive or negative sentiment.
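Because a single comment can carry more than one emotion, fine-grained models are often used in a multi-label way: the model produces a score per category, and every category above a cutoff is kept. The sketch below assumes such per-category scores already exist. The `select_emotions` function, the 0.5 threshold, and the "neutral" fallback are illustrative assumptions rather than part of any published pipeline.

```python
from typing import Dict, List


def select_emotions(scores: Dict[str, float], threshold: float = 0.5) -> List[str]:
    """Return every emotion whose score meets the threshold, strongest first.

    `scores` maps an emotion name to a model confidence in [0, 1].
    If nothing clears the threshold, fall back to a "neutral" label.
    """
    chosen = [label for label, p in scores.items() if p >= threshold]
    chosen.sort(key=lambda label: -scores[label])
    return chosen or ["neutral"]


# Hypothetical scores for a comment such as "Wow, how did you build that?"
example_scores = {"admiration": 0.8, "curiosity": 0.6, "grief": 0.1}
print(select_emotions(example_scores))  # ['admiration', 'curiosity']
```

Keeping several labels per comment lets a robot respond to mixed signals, for example admiration and curiosity together, instead of forcing a single category.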

Modern systems typically rely on transformer-based neural networks, such as BERT or GPT-style architectures, which analyze the context surrounding words in a sentence. These models can recognize that the phrase “That’s just great” may be sincere or sarcastic depending on what surrounds it.

In addition to textual data, social media provides other valuable signals. Emojis, for example, act as emotional markers that help researchers interpret the intended tone of a message. Images and videos provide facial expressions, body language, and visual context that can be used to train computer vision systems.
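One way emojis can serve as emotional markers is as weak labels: a post that contains an unambiguous emoji is labeled with the matching emotion, and the emoji is then removed so a model has to learn from the words alone. The sketch below is a simplified illustration. The emoji-to-emotion table and the `weak_label` function are assumptions made for this example.

```python
from typing import Optional, Tuple

# Hypothetical mapping; a real project would use a much larger, validated table.
EMOJI_TO_EMOTION = {
    "😂": "amusement",
    "😢": "sadness",
    "😡": "anger",
    "🎉": "joy",
}


def weak_label(post: str) -> Optional[Tuple[str, str]]:
    """Return (text_without_emoji, emotion), or None if the signal is unclear."""
    found = {emotion for emoji, emotion in EMOJI_TO_EMOTION.items() if emoji in post}
    if len(found) != 1:
        return None  # no known emoji, or conflicting emojis
    text = post
    for emoji in EMOJI_TO_EMOTION:
        text = text.replace(emoji, "")
    # Collapse any whitespace left behind by the removed emojis.
    return " ".join(text.split()), found.pop()


print(weak_label("Flight delayed again 😡"))  # ('Flight delayed again', 'anger')
print(weak_label("Not sure how I feel 😂😢"))  # None
```

Posts with conflicting emojis are dropped rather than guessed at, since noisy labels can do more harm than a smaller training set.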

When combined, these data sources help robots develop a richer understanding of how humans express emotion.

Why Empathy Matters in Human–Robot Interaction

A robot that can perform tasks efficiently is useful. A robot that can understand emotional context is far more valuable in situations where humans expect social interaction.

Healthcare is one of the clearest examples. In hospitals and elder care facilities, robots are increasingly used to assist patients, remind them to take medication, or provide companionship. If these machines can detect signs of distress, loneliness, or confusion, they can respond more appropriately.

Researchers studying therapeutic robotics have observed that patients often respond emotionally to machines that appear attentive. Even simple responses — adjusting tone, asking follow-up questions, or expressing concern — can significantly improve the quality of interaction.

Customer service is another environment where empathetic AI could make a major difference. Many companies already use chatbots for support, but these systems often fail when customers express frustration or sarcasm. A robot trained on real conversational data from social media may be better equipped to recognize these emotional cues.

Education also presents interesting possibilities. Robots used as learning companions could adapt their responses based on a student’s emotional state — encouraging them when they struggle or celebrating achievements when they succeed.

The Ethical Challenges of Using Social Media Data

Despite its potential, using social media data to train AI systems raises important ethical questions.

One major concern is privacy. Even when data is publicly available, users may not expect their posts to be used in machine learning research. Responsible researchers typically rely on anonymized datasets and follow strict ethical guidelines when collecting and processing data.
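A minimal sketch of what basic scrubbing might look like before a post enters a training set is shown below. The placeholder tokens, the regular expressions, and the `scrub_text` and `pseudonymize_author` helpers are assumptions made for illustration. Note that hashing an author ID is pseudonymization rather than full anonymization, and that the text of a post can still identify its author.

```python
import hashlib
import re

URL = re.compile(r"https?://\S+")
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")
HANDLE = re.compile(r"@\w+")


def scrub_text(text: str) -> str:
    """Replace URLs, email addresses, and @handles with placeholder tokens."""
    text = URL.sub("<URL>", text)
    text = EMAIL.sub("<EMAIL>", text)  # run before HANDLE so emails stay whole
    text = HANDLE.sub("<USER>", text)
    return text


def pseudonymize_author(author_id: str, salt: str) -> str:
    """Map an author ID to a stable, salted hash so posts can still be grouped."""
    digest = hashlib.sha256((salt + author_id).encode("utf-8")).hexdigest()
    return digest[:16]


post = "Thanks @maria, mail me at bob@example.com or see https://example.org/x"
print(scrub_text(post))
# Thanks <USER>, mail me at <EMAIL> or see <URL>
```

Keeping the salt secret and separate from the dataset makes it harder to reverse the mapping by hashing known usernames.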

Another challenge is bias. Social media does not represent society evenly. Certain demographics are overrepresented on specific platforms, while others are underrepresented. If robots are trained primarily on data from a limited cultural context, they may struggle to interpret emotions accurately in different communities.

For example, expressions of humor, sarcasm, or politeness vary widely across cultures. A phrase that signals frustration in one culture might be interpreted differently in another. Without diverse training data, robots could misinterpret emotional signals.
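One common way to soften this kind of imbalance is to weight training examples so that each group contributes equally overall. The sketch below assumes every example carries a group tag such as a language or region code. That tag and the `balancing_weights` function are hypothetical, and reweighting is only a partial remedy: it cannot create diversity that the data does not contain.

```python
from collections import Counter
from typing import Dict, List


def balancing_weights(groups: List[str]) -> Dict[str, float]:
    """Compute inverse-frequency weights, one per group.

    Each group's examples together receive the same total weight, and the
    weights sum to the number of examples across the whole dataset.
    """
    counts = Counter(groups)
    total = len(groups)
    n_groups = len(counts)
    return {group: total / (n_groups * count) for group, count in counts.items()}


# Hypothetical dataset with three English posts and one Japanese post.
weights = balancing_weights(["en", "en", "en", "ja"])
print(weights["ja"])  # 2.0
```

In this toy dataset each English post receives a weight of about 0.67 and the single Japanese post a weight of 2.0, so both groups carry equal influence during training.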

There is also the issue of toxic content. Social media platforms contain large amounts of harassment, misinformation, and aggressive language. Training AI models without proper filtering could inadvertently teach machines undesirable behaviors.

As a result, many research teams focus heavily on data curation, carefully selecting and labeling datasets that emphasize constructive social interactions.
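As a rough illustration of the filtering step in data curation, the sketch below drops posts that contain any word from a blocklist. The blocklist and the `is_acceptable` and `curate` functions are hypothetical. Keyword matching misses context and produces false positives, so it is best read as a first pass that would sit in front of trained classifiers and human review.

```python
import re
from typing import Iterable, List, Set

# Hypothetical blocklist; real lists are far larger and maintained with care.
BLOCKLIST: Set[str] = {"idiot", "stupid", "loser"}


def is_acceptable(text: str, blocklist: Set[str] = BLOCKLIST) -> bool:
    """Return True if no blocklisted word appears as a whole token."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    return tokens.isdisjoint(blocklist)


def curate(posts: Iterable[str]) -> List[str]:
    """Keep only the posts that pass the blocklist check."""
    return [post for post in posts if is_acceptable(post)]


posts = ["You are an idiot", "Congrats on the new job!"]
print(curate(posts))  # ['Congrats on the new job!']
```

Matching on whole tokens avoids flagging harmless words that merely contain a blocked string.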

The Future of Empathetic Machines

The use of social media data in robotics is still evolving, but the trajectory is clear. As artificial intelligence models continue to improve, robots will become increasingly capable of interpreting emotional context and responding in more natural ways.

Future systems will likely combine multiple sources of data — social media conversations, recorded dialogues, facial expression datasets, and real-world interactions — to build more sophisticated models of human emotion.

Some researchers even envision robots that continuously learn from ongoing human interactions, refining their emotional intelligence over time.

Ultimately, the goal is not to replace human empathy, but to build machines that can interact with people in ways that feel supportive and respectful. In environments like healthcare, education, and social support, such technology could have a profound impact.

Teaching robots empathy may sound like science fiction, but in many ways the training has already begun — quietly, across billions of conversations happening every day on social media.